How to Clone a Postgres Helm Release on GKE

Robin Storage on Google Marketplace

In this tutorial, we will create a clone of the PostgreSQL database that has been deployed on Google Kubernetes Engine (GKE). Then we will make changes to the clone and verify that the original database has remained unaffected by changes that were done to the clone.

Before starting with this tutorial, make sure Robin Storage is installed on GKE, your PostgreSQL database is deployed, has data loaded in it, and the Helm release is registered with Robin, and you have taken a snapshot of your PostgreSQL Helm release.

Create a clone of the PostgreSQL Helm Release on GKE

Application cloning improves the collaboration across Dev/Test/Ops teams. Teams can share app+data quickly, reducing the procedural delays involved in re-creating environments. Each team can work on their clone without affecting other teams. Clones are useful when you want to run a report on a database without affecting the source database application, or for performing UAT tests or for validating patches before applying them to the production database, etc.

Robin clones are ready-to-use “thin copy” of the entire app/database, not just storage volumes. Thin-copy means that data from the snapshot is NOT physically copied, therefore clones can be made very quickly. Robin clones are fully-writable and any modifications made to the clone are not visible to the source app/database.

Robin lets you clone not just the storage volumes (PVCs) but the entire database application including all its resources such as Pods, StatefulSets, PVCs, Services, ConfigMaps, etc. with a single command.

To create a clone from the existing snapshot created above, run the following command. Use the snapshot id we retrieved above.

robin clone create my-movies-clone Your_Snapshot_ID --wait

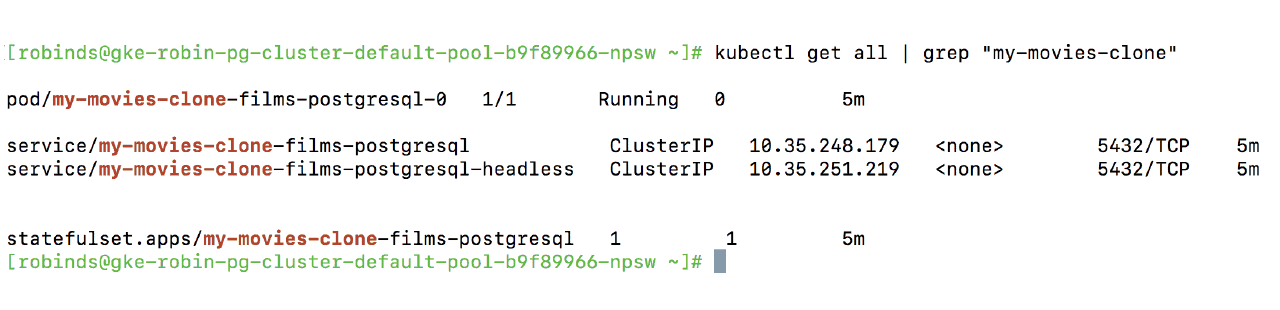

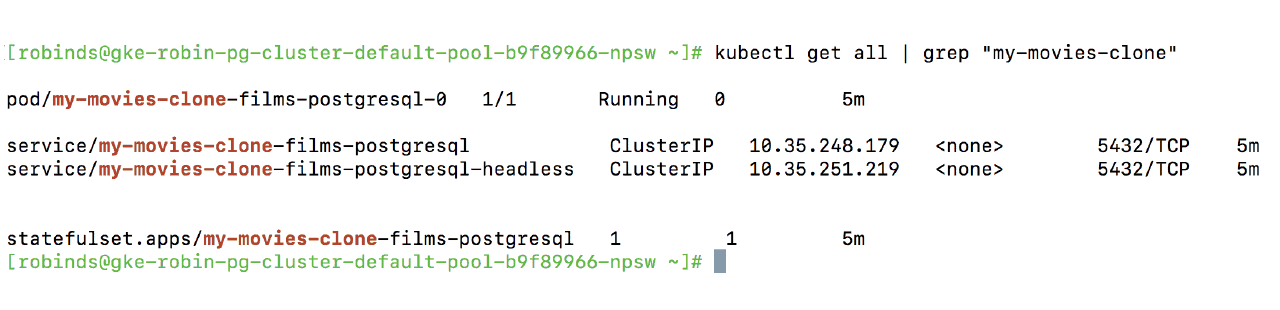

Let’s verify Robin has cloned all relevant Kubernetes resources.

kubectl get all | grep "my-movies-clone"

You should see an output similar to below.

Notice that Robin automatically clones all the required Kubernetes resources, not just storage volumes (PVCs), that are required to stand up a fully-functional clone of our database. After the clone is complete, the cloned database is ready for use.

Get Service IP address of our PostgreSQL database clone, and note the IP address.

export IP_ADDRESS=$(kubectl get service my-movies-clone-films-postgrsql -o jsonpath={.spec.clusterIP})

Get Password of our PostgreSQL database clone from Kubernetes Secret

export POSTGRES_PASSWORD=$(kubectl get secret my-movies-clone-films-postgresql -o jsonpath="{.data.postgresql-password}" | base64 --decode;

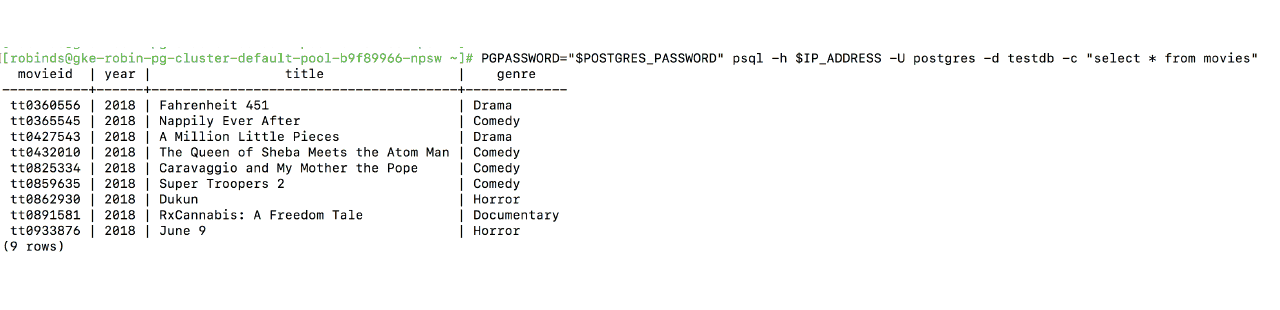

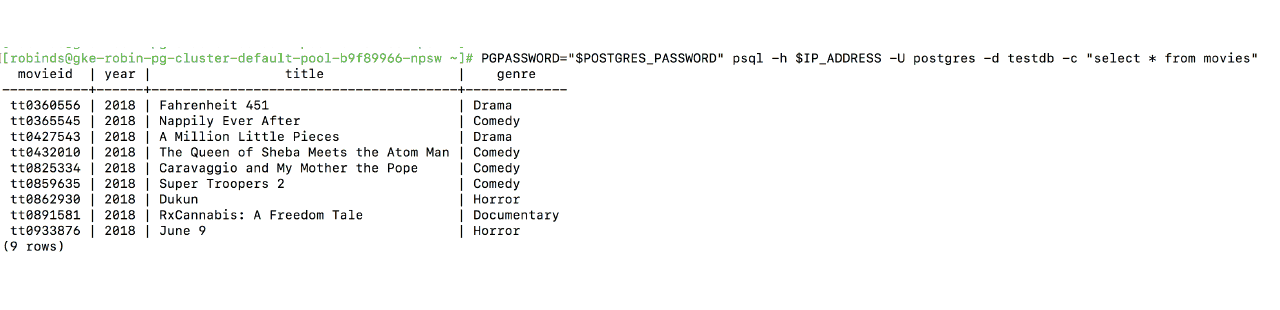

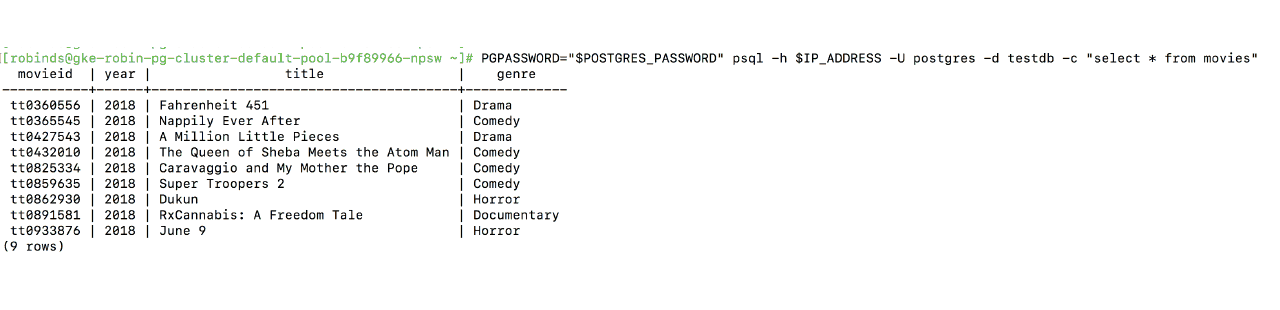

To verify we have successfully created a clone of our PostgreSQL database, run the following command.

PGPASSWORD="$POSTGRES_PASSWORD" psql -h $IP_ADDRESS -U postgres -d testdb -c "SELECT * from movies;"

You should see an output similar to the following with 9 movies.

We have successfully created a clone of our original PostgreSQL database, and the cloned database also has a table called “movies” with 9 rows, just like the original.

Now, let’s make changes to the clone and verify the original database remains unaffected by changes to the clone. Let’s delete the movie called “Super Troopers 2”.

PGPASSWORD="$POSTGRES_PASSWORD" psql -h $IP_ADDRESS -U postgres -d testdb -c "DELETE from movies where title = 'Super Troopers 2';"

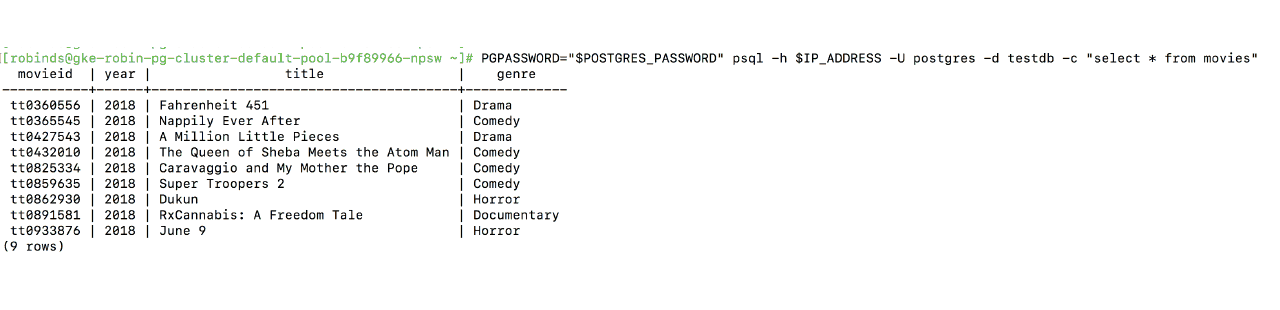

Let’s verify the movie has been deleted.

PGPASSWORD="$POSTGRES_PASSWORD" psql -h $IP_ADDRESS -U postgres -d testdb -c "SELECT * from movies;"

You should see an output similar to the following with 8 movies.

Now, let’s connect to our original PostgreSQL database and verify it is unaffected.

Get Service IP address of our original PostgreSQL database.

export IP_ADDRESS=$(kubectl get service films-postgresql -o jsonpath={.spec.clusterIP})

Get Password of our original PostgreSQL database from Kubernetes Secret.

export POSTGRES_PASSWORD=$(kubectl get secret --namespace default films-postgresql -o jsonpath="{.data.postgresql-password}" | base64 --decode;)

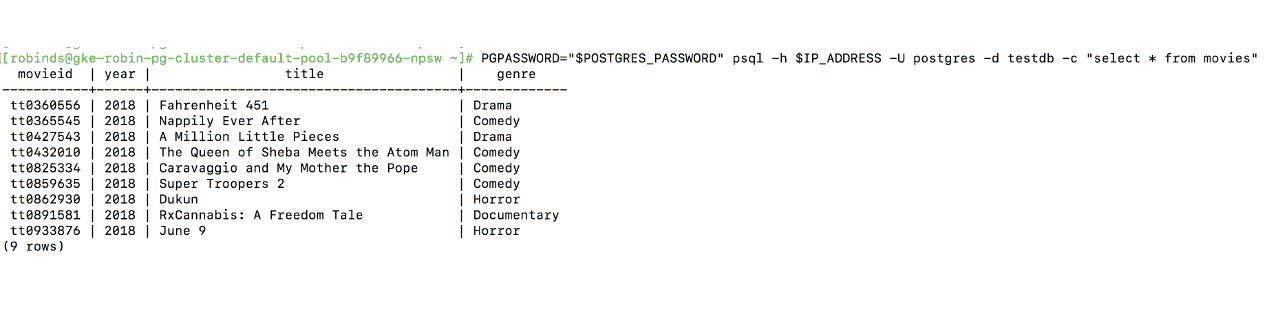

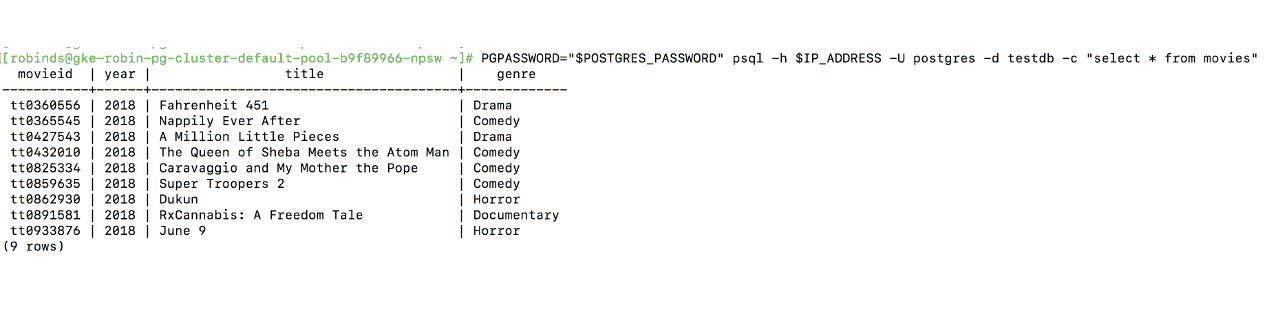

To verify that our PostgreSQL database is unaffected by changes to the clone, run the following command.

Let’s connect to “testdb” and check record :

PGPASSWORD="$POSTGRES_PASSWORD" psql -h $IP_ADDRESS -U postgres -d testdb -c "SELECT * from movies;"

You should see an output similar to the following, with all 9 movies present.

This means we can work on the original PostgreSQL database and the cloned database simultaneously without affecting each other. This is valuable for collaboration across teams where each team needs to perform a unique set of operations.

This concludes the clone PostgreSQL tutorial

Deploy PostgreSQL on GKE Tutorial

Snapshot PostgreSQL on GKE Tutorial