The Rise and Role of Application Pipelines

As enterprises adopt software-defined solutions, we’re beginning to hear more about “application pipelines.” What are application pipelines, why are they attracting attention, and how do they help businesses solve problems?

What is an application pipeline?

The term “pipeline” probably brings to mind a linear series of conduits that deliver water, sewage, oil or gas from one point to another. Or you may think of a sales pipeline as all of the sequential phases of relationship building designed to transform a cold prospect into a bona fide customer. Or, in the computer software industry, you may have heard “pipeline” used to describe a chain of data and control flow, where the output of one phase of code becomes the input of the next phase.
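The software sense of the word can be sketched in a few lines of Python. This is only an illustration, not code from any particular product: the stage functions (`parse`, `keep_large`, `count`), the helper `run_pipeline`, and the 1,000 threshold are all hypothetical names and values chosen for the example.

```python
from functools import reduce
from typing import Any, Callable, Sequence

# A stage is any function that takes the previous stage's output as its input.
Stage = Callable[[Any], Any]


def run_pipeline(stages: Sequence[Stage], data: Any) -> Any:
    """Feed data through each stage in order; each output becomes the next input."""
    return reduce(lambda value, stage: stage(value), stages, data)


def parse(lines):
    # Hypothetical stage: turn raw text amounts into numbers.
    return [float(line) for line in lines]


def keep_large(amounts):
    # Hypothetical stage: keep only amounts above an arbitrary threshold.
    return [amount for amount in amounts if amount > 1000.0]


def count(amounts):
    # Hypothetical stage: reduce the remaining amounts to a single number.
    return len(amounts)


result = run_pipeline([parse, keep_large, count], ["250.00", "1800.50", "4200.00"])
print(result)  # 2
```

Each stage knows nothing about its neighbors; it only agrees on the shape of the data passed along, which is what lets stages be swapped or reordered.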

Likewise, an “application pipeline” is a sequence of software applications or microservices that are linked together to help an enterprise achieve a high-level business outcome that would be difficult to achieve with one monolithic software application alone. It’s a model made possible by, but not limited to, microservices orchestrated with platforms like Kubernetes. In fact, it’s common to see application pipelines that combine services running in stateless containers with services running in stateful VMs (e.g., databases).

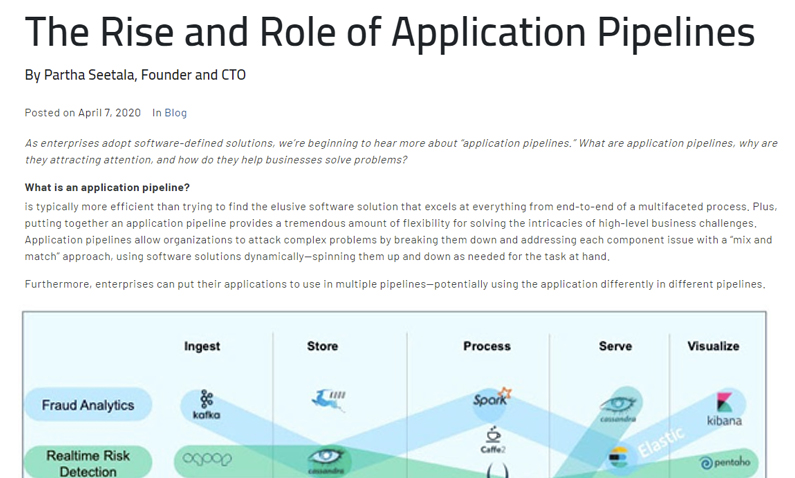

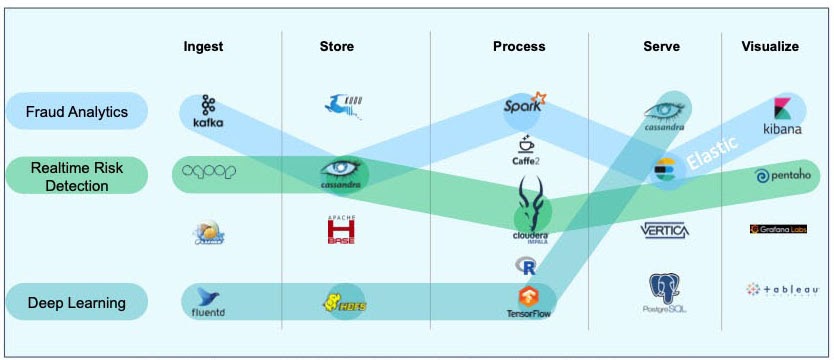

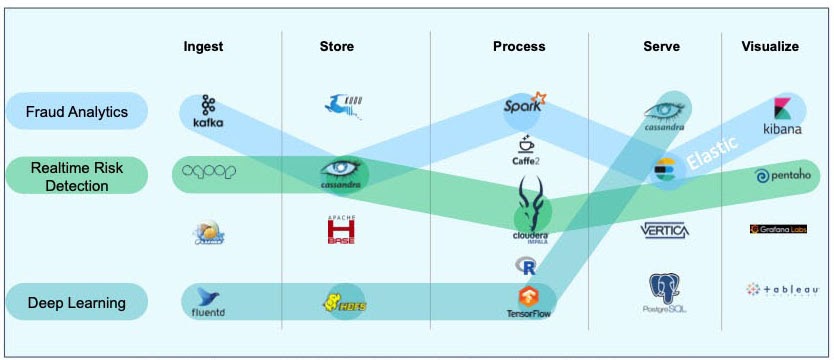

For instance, a popular application pipeline for fraud analytics is pictured below. A financial institution might use this pipeline to conduct fraud analytics on its credit card account activity. In this pipeline, Kafka ingests the credit card transaction data, Cassandra stores it, Cloudera processes it, and Pentaho provides a way to visualize it.

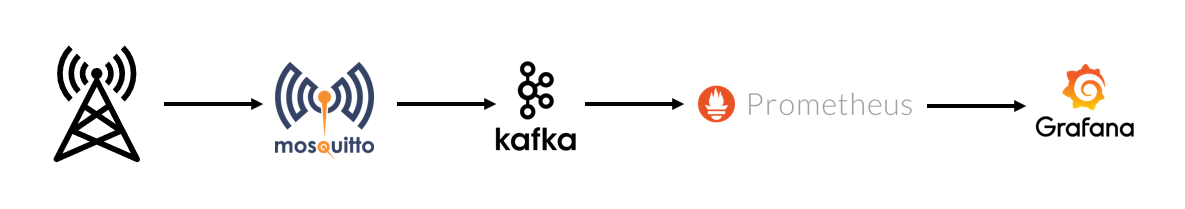

As a second example, consider an IoT analytics pipeline for a telecommunications equipment monitoring application. We can collect the data from the equipment sensors using MQTT on the edge server, stream the data to the datacenter using Kafka, store and query the time-series data in Prometheus, and visualize the data using Grafana.

Pipelines are a practical way to address complex business problems because linking a series of applications that each excel at a specific function is typically more efficient than searching for the elusive single product that excels at every step of a multifaceted process. An application pipeline also provides a great deal of flexibility: organizations can break a complex problem down and address each component with a “mix and match” approach, spinning software solutions up and down as needed for the task at hand.

Furthermore, enterprises can use the same application in multiple pipelines, potentially in a different role in each one.
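To make the reuse point concrete, here is a minimal sketch that models the two example pipelines described earlier as plain data and looks for applications that appear in both. The `Stage` class, the `roles_by_application` helper, and the short role descriptions are hypothetical names invented for this illustration; they are not part of any of the products mentioned.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    application: str  # product filling this slot in the pipeline
    role: str         # what it does in this particular pipeline


# The two example pipelines from the text, written out as ordered stages.
FRAUD_ANALYTICS = [
    Stage("Kafka", "ingest credit card transactions"),
    Stage("Cassandra", "store"),
    Stage("Cloudera", "process"),
    Stage("Pentaho", "visualize"),
]

IOT_ANALYTICS = [
    Stage("MQTT", "collect sensor data at the edge"),
    Stage("Kafka", "stream to the datacenter"),
    Stage("Prometheus", "store and query time-series data"),
    Stage("Grafana", "visualize"),
]


def roles_by_application(pipelines: dict[str, list[Stage]]) -> dict[str, dict[str, str]]:
    """Map each application to the role it plays in every pipeline that uses it."""
    usage: dict[str, dict[str, str]] = {}
    for pipeline_name, stages in pipelines.items():
        for stage in stages:
            usage.setdefault(stage.application, {})[pipeline_name] = stage.role
    return usage


usage = roles_by_application({"fraud": FRAUD_ANALYTICS, "iot": IOT_ANALYTICS})
# Keep only applications that show up in more than one pipeline.
shared = {app: roles for app, roles in usage.items() if len(roles) > 1}
print(shared)
```

In this sketch only Kafka is shared: it ingests transactions in the fraud pipeline and streams sensor data to the datacenter in the IoT pipeline.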

Application Pipelines = Service Chains in Telecom

In telco terminology, service chains are the counterpart of application pipelines. And just like applications in an application pipeline, services in a service chain can be quite complex and network intensive. In both cases, automation plays a critical role.

It’s no wonder, then, that use of application pipelines is on the rise, with enterprises embracing the power and flexibility they provide. However, application pipelines bring challenges of their own: every application in the pipeline needs to be provisioned on appropriate infrastructure, upgraded, backed up, and so on. For application pipelines to work, IT teams must therefore manage multiple interconnected applications throughout their lifecycles, an inherently complex task. They also need the ability to mix and match stateful and stateless services in an automated provisioning and management framework.

The Robin platform was designed to solve this problem with application bundles. We’ll talk more about that in the next post.